The adoption of agentic artificial intelligence (AI) in property and casualty (P&C) insurance is typically framed as a technology transformation. While agentic AI is certainly reshaping the way workflows are executed, its most lasting impact will be on how insurers build, retain, and scale expertise.

And the urgency around new ways to cultivate expertise is only growing. According to the IBM Institute for Business Value, about 40% of the global workforce will need to reskill over the next three years due to AI and automation. This underscores how critical learning speed and adaptability are to long-term performance.

For insurers, this suggests that ensuring people can continuously develop judgment alongside new tools may matter even more than the tools themselves. By treating agentic AI as a continuous learning system in addition to an efficiency engine, organizations can strengthen human expertise rather than erode it.

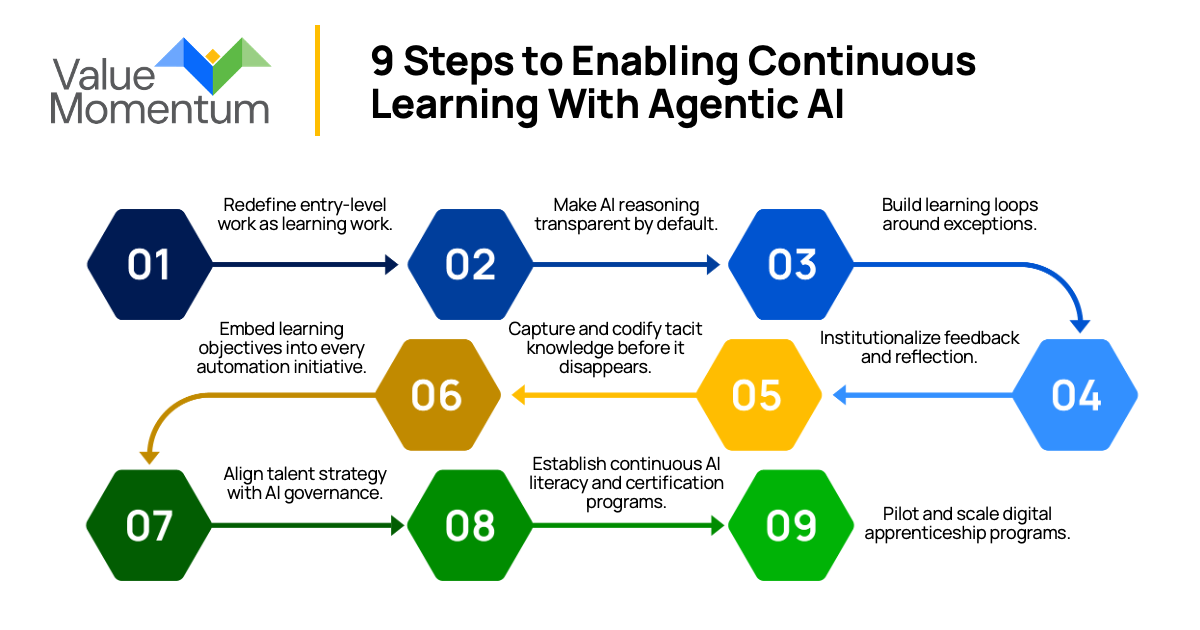

Enabling Continuous Learning With Agentic AI

As agentic systems absorb foundational work, learning no longer emerges automatically through repetition and tenure. Insurers must intentionally embed learning into how work is structured, how systems are designed, and how people progress along their career paths.

This need is already evident across the industry. Talent shortages are among the top barriers keeping insurers from fully realizing the value of AI initiatives. When technology adoption moves faster than workforce readiness, learning cannot be treated as a downstream outcome of transformation. It must become a deliberate, operational capability.

The nine steps below outline how insurers can redesign work, governance, and talent models so that agentic AI serves as a learning partner, not a replacement for the tasks that build expertise.

1. Redefine entry-level work as learning work.

As agentic AI takes over routine execution, entry-level roles should no longer be designed solely around process throughput. Instead, they should function as digital apprenticeships that emphasize learning how systems reason, where they struggle, and how decisions evolve.

Early-career employees gain expertise by auditing AI outputs, validating recommendations, and helping refine evaluation criteria. In underwriting, this may involve testing how risk summaries are generated. In claims, it could mean reviewing triage logic and providing structured feedback. The goal is not to slow automation, but to ensure learning remains embedded in how work begins.

2. Make AI reasoning transparent by default.

Explainability is often treated as a compliance requirement, but it is also a powerful learning tool. When AI systems reveal why a claim was routed, why a risk was flagged, or why an exception occurred, employees learn how decisions are formed in real time.

Transparent reasoning enables professionals to form judgments more quickly, connect outcomes to inputs, and intervene with confidence. The same mechanisms that support regulatory traceability can also teach employees how systems think, turning everyday workflows into continuous learning moments.

3. Build learning loops around exceptions.

Automation excels at handling predictable work, while human expertise grows when it encounters ambiguity. The cases that an AI agent cannot confidently resolve should be treated as opportunities for learning rather than as process failures.

By creating structured pathways for employees to analyze exceptions, insurers sharpen judgment while improving system reliability. Each unresolved case becomes a shared learning moment, strengthening both human decision-making and model performance over time.

4. Institutionalize feedback and reflection.

Learning does not happen through output alone; it requires reflection. As AI becomes part of daily operations, insurers must formalize feedback cycles that allow humans and systems to learn together.

Short retrospectives, review sessions, or digital feedback loops give teams space to examine outcomes, identify reasoning gaps, and refine approaches. Over time, these human–AI retrospectives form a continuous improvement engine rather than isolated corrections.

5. Capture and codify tacit knowledge before it disappears.

Much of insurance expertise lives in judgment calls, edge-case handling, and unwritten decision logic. As roles evolve and senior professionals retire, this tacit knowledge is at risk of being lost.

Agentic systems provide an opportunity to preserve it. By capturing reasoning, documenting why decisions were made, and logging expert feedback, insurers can create living knowledge bases that inform training, onboarding, and system improvement — ensuring expertise scales rather than fades.

6. Embed learning objectives into every automation initiative.

Automation programs are often measured only by efficiency gains. To avoid eroding capability, insurers should define learning outcomes alongside operational metrics.

Every AI initiative should answer questions such as: How will this system help employees better understand underwriting or claims logic? What new judgment capabilities will it develop? How will human feedback improve system reasoning? Embedding these objectives ensures automation strengthens expertise rather than bypassing it.

7. Align talent strategy with AI governance.

As AI plays a greater role in regulated decision-making, learning and governance become inseparable. Employees who interact with AI systems must understand how decisions are made, what risks are introduced, and how to intervene responsibly.

Aligning HR, compliance, and technology teams ensures learning, ethics, and oversight evolve together. This alignment supports regulatory readiness while building a workforce capable of exercising judgment in AI-assisted environments.

8. Establish continuous AI literacy and certification programs.

One-time training is not enough in an environment where systems and workflows evolve continuously. Insurers should build structured learning paths that develop shared fluency across business and technical teams.

Programs focused on AI literacy, system oversight, and governance fundamentals ensure employees can engage meaningfully with agentic systems from day one and continue developing as those systems mature.

9. Pilot and scale digital apprenticeship programs.

Rather than attempting enterprise-wide change all at once, insurers should start small. Pilot digital apprenticeship models in functions such as claims intake, underwriting support, or policy servicing, pairing early-career staff with AI assistants.

By measuring not just speed and accuracy but also confidence, reasoning quality, and adaptability, insurers can refine these programs before scaling them across the organization. This disciplined approach turns learning into a measurable, repeatable capability.

Agentic AI cannot be treated as a standalone technology initiative. Without an intentional learning architecture, efficiency will scale faster than expertise, leaving in its wake a major talent gap that will stunt insurers' ability to grow.

Designing Learning as a Core Insurance Capability

Without intentional design behind their agentic AI initiatives, insurers risk operating models that are faster and more consistent, yet increasingly dependent on systems few people fully understand. Insurers that treat learning as a core capability rather than a byproduct of automation can avoid this outcome.

By redesigning entry-level work, making AI reasoning visible, institutionalizing feedback, and aligning learning with governance, organizations can ensure human judgment evolves alongside intelligent systems — preserving resilience, accountability, and long-term performance.

To explore this transformation in greater depth, read my whitepaper, “The Vanishing Apprenticeship: Rebuilding Career Pathways in the Age of Agentic AI,” which examines how insurers can redesign learning, careers, and governance to thrive in an AI-driven future.